Some time ago we needed to check Google's crawl rate more often than Webmaster Tools allowed, and I wrote about a small reincarnation of the BotStat idea in Python. The initial post was here. It currently works with Nginx and Apache and detects your access log format automatically (if detection fails, you can set the format manually on the command line or in a config file).
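BotStat's own detection logic isn't described in this post, so the following is only a minimal sketch of the general idea, assuming the standard Apache/Nginx "combined" layout and Apache's "common" layout: try each candidate regex against a sample of log lines and keep the one that matches most often. The names `CANDIDATES` and `detect_format` are hypothetical.

```python
import re
from typing import Iterable, Optional

# Apache "combined" and Nginx's default "combined" layout:
# ip ident user [time] "request" status bytes "referer" "user-agent"
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Apache "common" layout: the same fields without referer and user-agent.
COMMON = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<bytes>\d+|-)$'
)

# Hypothetical candidate table; a real tool would carry more formats.
CANDIDATES = {"combined": COMBINED, "common": COMMON}


def detect_format(sample_lines: Iterable[str]) -> Optional[str]:
    """Return the name of the candidate pattern matching the most lines,
    or None if no pattern matches any line."""
    lines = [line.rstrip("\n") for line in sample_lines]
    best_name, best_hits = None, 0
    for name, pattern in CANDIDATES.items():
        hits = sum(1 for line in lines if pattern.match(line))
        if hits > best_hits:
            best_name, best_hits = name, hits
    return best_name
```

Scoring a sample of a few hundred lines, rather than trusting the first one, keeps the choice robust against the occasional malformed entry. Because `re.match` only anchors at the start, a "combined" line with extra custom fields appended still matches the combined pattern.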

For instance, running it from cron can give you a fresh report every morning when you arrive at the office. We also tested it on a few of our friends' WordPress blogs.

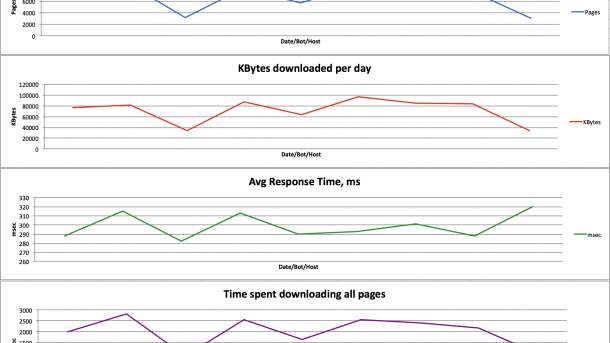

Raw results in CSV are useful, but if you manage your site actively and need to compare data, an Excel workbook with charts is often handier. So we have just released an updated version that can produce the report as an Excel file with a few charts in consistent colors.
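For comparison, the raw CSV side can be as simple as the sketch below, which uses only Python's standard `csv` module. The input shape (a dict of per-day stats) and the column names are hypothetical and are not BotStat's actual report layout.

```python
import csv
import io
from typing import Dict


def stats_to_csv(daily_stats: Dict[str, Dict[str, float]]) -> str:
    """Render {date: {"pages": ..., "kbytes": ..., "avg_ms": ...}} as CSV text,
    one row per day in date order (hypothetical column layout)."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["date", "pages", "kbytes", "avg_response_ms"])
    for day in sorted(daily_stats):
        s = daily_stats[day]
        writer.writerow([day, s["pages"], round(s["kbytes"], 2), round(s["avg_ms"], 1)])
    return buf.getvalue()
```

A file like this opens directly in Excel, but the charts and sheet-level filters described here need a proper workbook, which is what the new export adds.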

Filters applied on the "Data" sheet also affect the charts. We're working on multi-line graphs for bot stats, so for now it's best to filter down to one bot and one domain 🙂
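Narrowing the data to one bot and one domain boils down to classifying each hit by its User-Agent. Here is a minimal sketch of that step, assuming records are already parsed into dicts with an `agent` field and a `domain` field (the latter might come from the log file name or from Nginx's `$host`; that is an assumption). The signature table and function names are hypothetical. Note that User-Agent strings can be spoofed; confirming a hit really came from Googlebot requires a reverse DNS check, which this sketch does not do.

```python
from typing import Dict, List, Optional

# Hypothetical bot-name -> User-Agent substring table (matched case-insensitively).
BOT_SIGNATURES = {
    "Googlebot": "googlebot",
    "Bingbot": "bingbot",
    "YandexBot": "yandexbot",
    "Baiduspider": "baiduspider",
}


def identify_bot(user_agent: str) -> Optional[str]:
    """Return the first bot whose signature appears in the User-Agent, else None."""
    ua = user_agent.lower()
    for name, signature in BOT_SIGNATURES.items():
        if signature in ua:
            return name
    return None


def filter_one_bot_one_domain(
    records: List[Dict[str, str]], bot: str, domain: str
) -> List[Dict[str, str]]:
    """Keep only the records for a single bot on a single domain."""
    return [
        r for r in records
        if r["domain"] == domain and identify_bot(r["agent"]) == bot
    ]
```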

The report includes the same three simple charts that Webmaster Tools shows: pages per day, kilobytes per day, and average response time in milliseconds.
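These three metrics are plain per-day aggregates over the bot's hits. A minimal sketch, assuming records are already parsed and filtered to one bot, with a `time` field in the usual `10/Oct/2016:13:55:36 +0000` log style, a `bytes` field, and a `request_time` field in seconds. The default "combined" format has no timing field: Nginx's `$request_time` (seconds) must be added to a custom `log_format`, and Apache's `%D` logs microseconds instead, so adjust the unit accordingly. Here "pages" counts every request, not only HTML pages.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable


def daily_crawl_stats(records: Iterable[Dict[str, str]]) -> Dict[str, Dict[str, float]]:
    """Aggregate hits into {date: {"pages", "kbytes", "avg_ms"}}, sorted by date."""
    totals = defaultdict(lambda: {"pages": 0, "bytes": 0, "time_s": 0.0})
    for r in records:
        # The date is taken in the log's own UTC offset.
        day = datetime.strptime(r["time"], "%d/%b/%Y:%H:%M:%S %z").date().isoformat()
        t = totals[day]
        t["pages"] += 1
        t["bytes"] += 0 if r["bytes"] == "-" else int(r["bytes"])  # "-" means no body
        t["time_s"] += float(r["request_time"])
    return {
        day: {
            "pages": t["pages"],
            "kbytes": t["bytes"] / 1024,
            "avg_ms": t["time_s"] / t["pages"] * 1000,
        }
        for day, t in sorted(totals.items())
    }
```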

On top of that, we were always curious how much time in total Google or another search engine bot spends on a website each day.
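One way to estimate that daily total is to sum the request durations of all of the bot's hits per calendar day. The sketch below assumes the same hypothetical parsed records as before (`time` plus `request_time` in seconds, which requires a custom log format) and is only one possible interpretation of "time spent". Because a bot may fetch several URLs in parallel, this sum can exceed the wall-clock time the bot was actually active.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable


def total_bot_seconds_per_day(records: Iterable[Dict[str, str]]) -> Dict[str, float]:
    """Sum request durations (in seconds) per calendar day, sorted by date."""
    totals: Dict[str, float] = defaultdict(float)
    for r in records:
        day = datetime.strptime(r["time"], "%d/%b/%Y:%H:%M:%S %z").date().isoformat()
        totals[day] += float(r["request_time"])
    return dict(sorted(totals.items()))
```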

Comparing the trends across these charts can tell you quite a bit 🙂

You can check it out on GitHub: https://github.com/EndurantDevs/botstat-seo

Or install the BotStat tool with pip:

`pip install botstat`

Good luck with your site's SEO!
